# How to Run DeepSeek Locally — The Best Open Reasoning Model

Run DeepSeek R1 on your own computer. Known for chain-of-thought reasoning, math, and coding, it is one of the most capable open-source models available today.

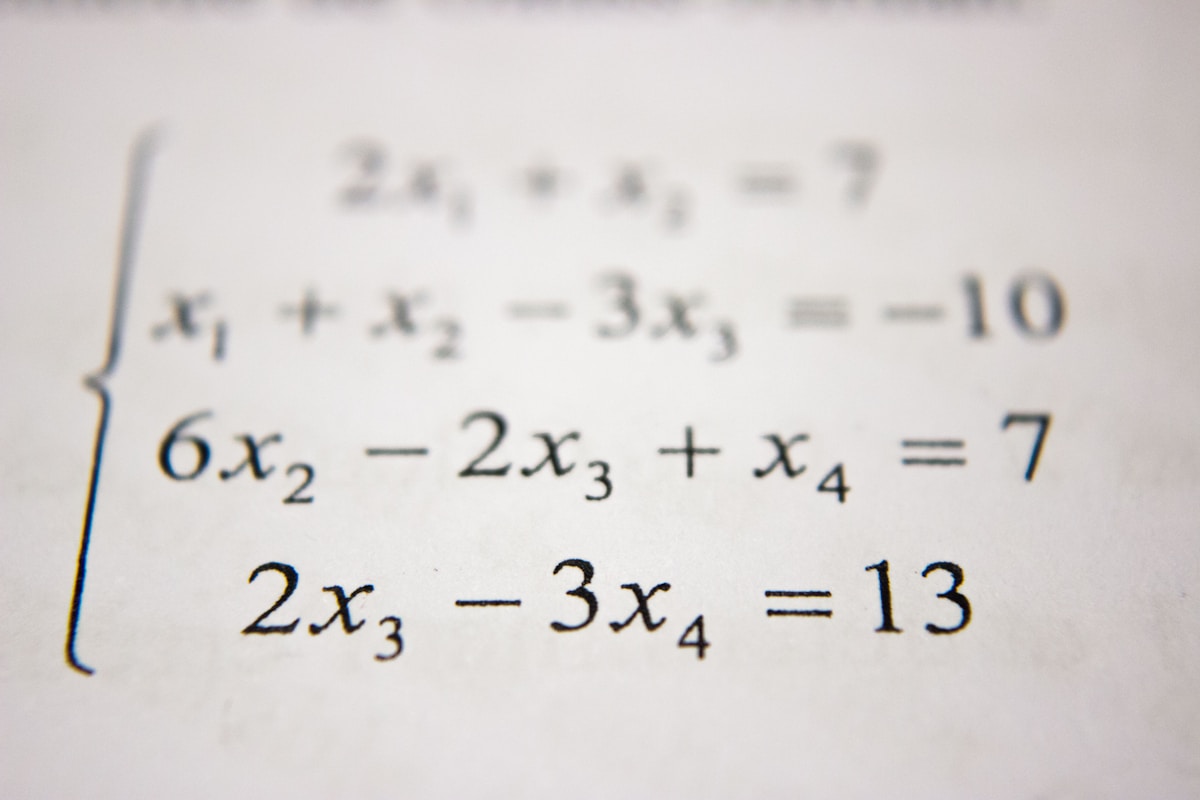

DeepSeek R1 is a family of reasoning-focused AI models. Unlike standard chat models, DeepSeek shows its thinking process — breaking down complex problems step by step before giving an answer. It's particularly strong at math, coding, and logical reasoning.

## What Makes DeepSeek Different

DeepSeek R1 uses chain-of-thought reasoning. Before answering, it works through the problem internally. Here's what a typical interaction looks like:

You: What is 15% of 847?

Thinking... Let me calculate 15% of 847. 10% of 847 = 84.7. 5% of 847 = 42.35. 15% = 10% + 5% = 84.7 + 42.35 = 127.05

Answer: 15% of 847 is 127.05.

Working through intermediate steps like this tends to make DeepSeek R1 more accurate on multi-step tasks than models that answer directly.
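The arithmetic in the example above is easy to verify. The following is a minimal sketch that repeats the same 10% + 5% decomposition with Python's `decimal` module (chosen here to avoid floating-point rounding; the variable names are illustrative).

```python
from decimal import Decimal

# Reproduce the decomposition from the example: 15% = 10% + 5%.
value = Decimal("847")
ten_percent = value * Decimal("0.10")    # 84.70
five_percent = value * Decimal("0.05")   # 42.35
fifteen_percent = ten_percent + five_percent

# Cross-check against computing 15% directly.
direct = value * Decimal("0.15")

print(fifteen_percent)            # 127.05
print(fifteen_percent == direct)  # True
```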

## Available Models

| Model | Size (Q4) | Min RAM | Best For |
|---|---|---|---|
| DeepSeek R1 1.5B | ~1.1 GB | 4 GB | Basic reasoning |
| DeepSeek R1 8B | ~4.9 GB | 8 GB | Math, coding, reasoning |
| DeepSeek R1 14B | ~9.0 GB | 16 GB | Advanced reasoning |
| DeepSeek R1 32B | ~20 GB | 32 GB | Professional use |
| DeepSeek R1 70B | ~42 GB | 64 GB | Maximum quality |

**Recommendation:** DeepSeek R1 8B is the best starting point on a machine with 8 GB of RAM.
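As a rough illustration, the table above can be turned into a small lookup that suggests a model for a given amount of RAM. This is only a sketch: `pick_model` is a hypothetical helper, the RAM thresholds are copied from the table, and the tags other than `8b` and `1.5b` are assumed to follow the same naming pattern.

```python
from typing import Optional

# (Ollama tag, minimum RAM in GB), ordered from largest to smallest.
# RAM figures come from the table above; tags other than 8b and 1.5b
# are assumed to follow the same naming pattern.
MODELS = [
    ("deepseek-r1:70b", 64),
    ("deepseek-r1:32b", 32),
    ("deepseek-r1:14b", 16),
    ("deepseek-r1:8b", 8),
    ("deepseek-r1:1.5b", 4),
]


def pick_model(ram_gb: float) -> Optional[str]:
    """Return the largest model whose minimum RAM fits, or None."""
    for tag, min_ram in MODELS:
        if ram_gb >= min_ram:
            return tag
    return None


print(pick_model(8))   # deepseek-r1:8b
print(pick_model(24))  # deepseek-r1:14b
print(pick_model(2))   # None
```

The minimum-RAM column is a floor, not a guarantee of comfortable performance, so stepping down one size is a reasonable choice on a machine that is also running other applications.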

## Quick Start with Ollama

```bash
# Install Ollama
curl -fsSL https://ollama.com/install.sh | sh

# Run DeepSeek R1 8B
ollama run deepseek-r1:8b

# Try smaller sizes for lower-spec devices
ollama run deepseek-r1:1.5b
```

## What DeepSeek R1 Excels At

### Math and Calculation

```text
>>> A rectangular garden has a perimeter of 60 meters.
The length is 5 meters more than the width.
What are the dimensions and the area?

**Thinking...**
Let width = w, length = l = w + 5
Perimeter = 2(w + l) = 60
2(w + w + 5) = 60
2(2w + 5) = 60
4w + 10 = 60
4w = 50
w = 12.5
l = 17.5
Area = w × l = 12.5 × 17.5 = 218.75

**Width:** 12.5 meters
**Length:** 17.5 meters
**Area:** 218.75 square meters
```

### Coding

```text
>>> Write a function to find the longest palindrome in a string

**Thinking...** I'll use expand-around-center approach.
For each position, expand both odd and even length palindromes.
```

```python
def longest_palindrome(s: str) -> str:
    if not s:
        return ""

    def expand(left: int, right: int) -> str:
        while left >= 0 and right < len(s) and s[left] == s[right]:
            left -= 1
            right += 1
        return s[left + 1 : right]

    result = ""
    for i in range(len(s)):
        odd = expand(i, i)
        even = expand(i, i + 1)
        result = max(result, odd, even, key=len)
    return result
```
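Model-written code is worth checking before use. One simple option, sketched below, is to compare it against a slow but obviously correct brute-force reference. The helper names (`brute_force_longest`, `agrees_with_reference`) and the sample strings are illustrative, and the check compares lengths rather than exact strings because several palindromes can tie for longest.

```python
from typing import Callable, Iterable


def is_palindrome(text: str) -> bool:
    return text == text[::-1]


def brute_force_longest(s: str) -> str:
    """Reference answer: try every substring, O(n^3) but obviously correct."""
    best = ""
    for start in range(len(s)):
        for end in range(start + 1, len(s) + 1):
            candidate = s[start:end]
            if len(candidate) > len(best) and is_palindrome(candidate):
                best = candidate
    return best


def agrees_with_reference(fn: Callable[[str], str], samples: Iterable[str]) -> bool:
    """Check that fn returns a palindromic substring of maximal length."""
    for s in samples:
        got = fn(s)
        expected = brute_force_longest(s)
        if got not in s or not is_palindrome(got) or len(got) != len(expected):
            return False
    return True


SAMPLES = ["", "a", "babad", "cbbd", "forgeeksskeegfor", "abc"]
print(brute_force_longest("cbbd"))  # bb
```

With the `longest_palindrome` function from the transcript in scope, `agrees_with_reference(longest_palindrome, SAMPLES)` should return `True`.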

### Logical Reasoning

```text
>>> If all roses are flowers, and some flowers fade quickly, can we conclude that some roses fade quickly?

**Thinking...** This is a classic syllogism:
- All roses are flowers (roses are a subset of flowers)
- Some flowers fade quickly
- Can we conclude some roses fade quickly?
No, we cannot. The "some flowers" that fade quickly might all be non-roses. The premises don't guarantee any overlap between roses and the quickly-fading flowers.

No, we cannot conclude that. The "some flowers" that fade quickly could all be non-roses. The premises don't establish that any roses are among the quickly-fading flowers.
```
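The reasoning above amounts to finding a counterexample: a situation where both premises hold and the conclusion does not. A minimal sketch with Python sets makes this concrete (the specific flowers are made up for illustration).

```python
# A hypothetical world in which both premises hold but the conclusion fails.
flowers = {"rose", "tulip", "poppy"}
roses = {"rose"}
fades_quickly = {"poppy"}

premise_1 = roses <= flowers               # all roses are flowers
premise_2 = bool(flowers & fades_quickly)  # some flowers fade quickly
conclusion = bool(roses & fades_quickly)   # some roses fade quickly

print(premise_1, premise_2, conclusion)  # True True False
```

Because one such world exists, the conclusion does not follow from the premises, which matches the model's answer.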

## Understanding the Think Tags

DeepSeek R1 wraps its reasoning in `<think>` and `</think>` tags before giving the final answer. This is normal behavior: the model is showing its work.

- Some APIs and front ends hide the thinking or return it separately from the final answer

- It adds latency but usually improves accuracy on multi-step problems

- For simple questions (greetings, basic facts), the thinking is brief

- For complex problems, the thinking can be quite long
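When the raw output does include the reasoning, a script usually wants only the final answer. The sketch below assumes the reasoning is delimited by `<think>` and `</think>` tags in the raw text; `split_thinking` is a hypothetical helper and the sample string is made up for illustration.

```python
import re
from typing import Tuple

# Matches one <think>...</think> block, including newlines inside it.
THINK_BLOCK = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_thinking(raw: str) -> Tuple[str, str]:
    """Return (thinking, answer) from raw model output."""
    thinking = "\n".join(part.strip() for part in THINK_BLOCK.findall(raw))
    answer = THINK_BLOCK.sub("", raw).strip()
    return thinking, answer


raw_output = "<think>10% is 84.7, 5% is 42.35.</think>\n15% of 847 is 127.05."
thinking, answer = split_thinking(raw_output)
print(answer)  # 15% of 847 is 127.05.
```

If the output contains no tags, the function returns an empty string for the thinking and the whole text as the answer, so it is safe to apply to simple replies as well.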

## Performance Tips

- **Be patient** — reasoning models take longer to respond, but answers are more accurate

- **Give clear, specific prompts** — DeepSeek performs best with well-defined problems

- **Ask for step-by-step** — even though it reasons internally, asking explicitly often improves output

- **Use for complex tasks** — for simple chat, a standard model like Llama may be faster
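These tips also apply when calling the model from a script. The sketch below assumes Ollama is running locally on its default port (11434) and uses its `/api/generate` endpoint with only the Python standard library. The helper names, the step-by-step suffix, and the timeout value are illustrative choices, not part of Ollama.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # default local address


def build_request(problem: str, model: str = "deepseek-r1:8b") -> urllib.request.Request:
    """Build a non-streaming generate request with an explicit step-by-step ask."""
    payload = {
        "model": model,
        "prompt": f"{problem}\n\nSolve this step by step, then state the final answer.",
        "stream": False,  # return one JSON object instead of a stream of chunks
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(problem: str, timeout: float = 300.0) -> str:
    """Send the request; the generous timeout allows for long reasoning."""
    with urllib.request.urlopen(build_request(problem), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

With the model already pulled, `ask("What is 15% of 847?")` would return the generated text as a string. The long timeout reflects the first tip: reasoning models can take a while before they answer.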

## When to Use DeepSeek vs Other Models

| Task | Best Model |
|------|-----------|
| Math calculations | DeepSeek R1 |
| Logical reasoning | DeepSeek R1 |
| Complex coding | DeepSeek R1 or Qwen 2.5 |
| Quick chat | Llama 3.1 or Mistral |
| Multilingual | Qwen 2.5 |
| Low RAM (4GB) | Llama 3.2 3B |

## Summary

DeepSeek R1 is the go-to model for tasks that require careful reasoning. Its chain-of-thought approach tends to make it more accurate on math, coding, and logic problems than models that answer directly. The 8B model runs well on standard hardware with 8 GB of RAM.

## Next Steps

- [Best Models for 8GB RAM](/blog/models-for-8gb-ram) — compare with other models

- [How to Run Qwen Locally](/blog/how-to-run-qwen-locally) — another top coding model

- [How to Run Llama Locally](/blog/how-to-run-llama-locally) — the popular general-purpose model